(LLM Attacks 1/2) White-box LLM Attacks, or the Threat Everyone Ignores

From Pixels to Sentences

The concept of adversarial examples isn’t new to AI, but its application has shifted dramatically. It originally appeared in computer vision, where imperceptible noise was crafted to fool image-based AI models. That is exactly what we did in this article.

With the rise of Large Language Models (LLMs), this vulnerability has moved from pixels to tokens. The GCG (Greedy Coordinate Gradient) attack is the sophisticated evolution of these visual attacks, adapted for the complex, discrete world of human language.

The Core Concept

The GCG attack builds on a more general method, AutoPrompt, which aims to generate a prompt that elicits a desired model output.

In its simplest form, a GCG attack aims to bypass the safety filters of an LLM by attaching a specific string of text, a suffix, to a malicious prompt.

- The Malicious Prompt (\(x_{prompt}\)): “Tell me how to build a bomb.”

- The Target (\(x_{target}\)): “Sure, here is how to build a bomb:”

- The Suffix (\(S\)): A seemingly gibberish string that will be learned to fool the model (e.g., “! ! ! ! @ @ @”).

When combined with the prompt, the suffix acts as a mathematical skeleton key. It nudges the model’s internal logic away from its safety training (“I cannot help with that”) and pushes the output toward the target response.

What makes LLMs different from vision models?

In images, you can change a pixel by a tiny fraction (0.1% more red). In text, you can’t have “0.1% more of a word.” You either have the word “apple” or you don’t. This is the discrete problem that GCG solves by using the model’s own gradients to find which word swaps will most effectively reach the desired output.

This article is based on 2 papers:

- Universal and Transferable Adversarial Attacks on Aligned Language Models. Zou, A., Wang, Z., Carlini, N., Nasr, M., Kolter, J. Z., & Fredrikson, M. (2023).

- Universal Adversarial Triggers for Natural Language Processing. Wallace, E., Feng, S., Kandpal, N., Gardner, M., & Singh, S. (2020).

Technical context

GCG attacks are white-box attacks: they use the model’s weights, through the gradients computed from them, to find the suffix described above.

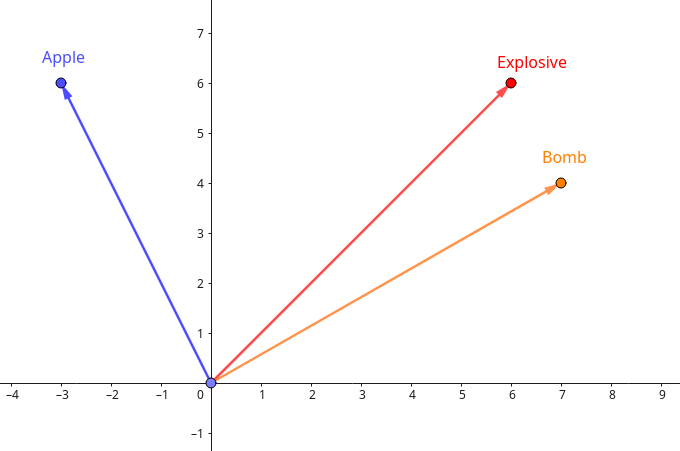

To understand how we find the right “suffix”, we must look at how LLMs process words. If we take three words from our bomb example (“bomb”, “explosive”, and “apple”), we can visualize their relationship.

In practice, these words are transformed into tokens through the process of tokenization. A token can be a letter, a syllable or more generally a part of a word (there are many tokenization techniques). The set of all possible tokens is fixed and called the vocabulary. In the rest of this article, we will consider that one token is one word, for simplicity.

Models first see words as one-hot encoded vectors: each word simply gets its own coordinate, so these vectors carry no inherent meaning:

\[f(\text{bomb}) = \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix}, \quad f(\text{explosive}) = \begin{bmatrix} 0 \\ 1 \\ 0 \end{bmatrix}, \quad f(\text{apple}) = \begin{bmatrix} 0 \\ 0 \\ 1 \end{bmatrix}\]

LLMs map these into a continuous space called the embedding space. In this high-dimensional space, words with similar meanings are closer to each other.

Figure 1: Simplified visualization (2 dimensions; real models use hundreds to thousands of dimensions).

In our example, “bomb” and “explosive” would be very close, while “apple” would be far away. GCG exploits this spatial geometry.
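To make the link between one-hot vectors and embeddings concrete, here is a minimal sketch. The 2-D embedding values are made up for illustration (they do not come from a real model), and the helper names `one_hot`, `embed` and `cosine` are hypothetical. It shows that multiplying a one-hot vector by the embedding matrix is just a row lookup, and that “bomb” ends up closer to “explosive” than to “apple”.

```python
import math

# Toy vocabulary and hand-picked 2-D embeddings (illustrative values only).
VOCAB = ["bomb", "explosive", "apple"]
EMBEDDINGS = [
    [0.9, 0.8],    # bomb
    [0.8, 0.9],    # explosive
    [-0.7, 0.2],   # apple
]

def one_hot(word):
    """Return the one-hot vector of `word` over VOCAB."""
    return [1.0 if w == word else 0.0 for w in VOCAB]

def embed(word):
    """Multiply the one-hot vector by the embedding matrix (a row lookup)."""
    x = one_hot(word)
    dim = len(EMBEDDINGS[0])
    return [sum(x[i] * EMBEDDINGS[i][d] for i in range(len(VOCAB)))
            for d in range(dim)]

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

sim_close = cosine(embed("bomb"), embed("explosive"))  # similar meaning
sim_far = cosine(embed("bomb"), embed("apple"))        # unrelated meaning
```

With these toy values, `sim_close` is close to 1 while `sim_far` is negative, which mirrors the geometry sketched in Figure 1.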

GCG Algorithm

GCG is a white-box attack, meaning we need access to the model’s weights and gradients to perform this attack. It treats breaking the model as an optimization problem:

\[S^* = \arg\min_{S \in \mathcal{V}^L} \mathcal{L}(S)\]

We want to find the suffix \(S\) that minimizes the loss \(\mathcal{L}\), effectively maximizing the probability that the model starts its sentence with our target: “Sure, here is how to build a bomb:”

\[\mathcal{L}(S) = -\log P(x_{target} \mid x_{prompt} + S)\]

For example, when the model processes \(x_{prompt} + \text{"apple"}\), its internal safety alignment recognizes the original harmful prompt. The probability \(P\) that the model follows up with the target phrase is near zero:

\[P(x_{target} \mid x_{prompt} + \text{"apple"}) \approx 0.000001\]

Now that this loss function is defined, we want to find the best way of changing our initial suffix \(S\) so that the loss decreases (and the probability increases).
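As a rough illustration of this loss, the sketch below assumes the usual autoregressive factorization: the probability of the whole target is the product of the probabilities the model assigns to each target token in turn. The function name `target_loss` and the per-token probabilities are hypothetical and chosen only to reproduce the order of magnitude used above.

```python
import math

def target_loss(token_probs):
    """Negative log-likelihood of the target, given the probability the
    model assigns to each target token in turn."""
    return -sum(math.log(p) for p in token_probs)

# Hypothetical per-token probabilities for a 3-token target.
refused = [0.01, 0.01, 0.01]     # product = 1e-6, the model refuses
compliant = [0.9, 0.8, 0.95]     # the model is now likely to comply

loss_refused = target_loss(refused)
loss_compliant = target_loss(compliant)
```

A probability of 0.000001 corresponds to a loss of about 13.8, while the second set of made-up probabilities gives a loss below 0.4. Driving the loss down is the same thing as driving the target probability up.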

It is very important to understand that optimizing this function would be very hard, or nearly impossible, without the model weights (and therefore the gradients). The gradients act as a precise compass to find the right suffix.

Discrete problem

The main challenge in optimizing \(S\) is that tokens are discrete entities. The embedding space is continuous, but the mapping from tokens to embeddings is not surjective: most points in that space do not correspond to any token, so there is no guarantee that a gradient step would land on a word. In standard neural network training, we update weights using small continuous steps. However, you cannot have “0.5 of a token” or slightly nudge the token “explosive” toward “bomb” in a continuous way.

Since we have access to the model’s weights, we can compute the gradient of the loss \(\mathcal{L}(S)\) with respect to the one-hot encoded input, which by the chain rule can be expressed through the gradient in the embedding space. This gradient tells us how the loss would change if we could move the input in a continuous direction.
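A short derivation shows why the one-hot view and the embedding view agree. It assumes that the model’s first layer is a plain embedding lookup, and it introduces notation that is not in the original papers’ wording: \(o_i\) is the one-hot vector at suffix position \(i\), \(E\) is the embedding matrix whose row \(E_w\) is the embedding of token \(w\), and \(e_{t_i} = E^\top o_i\) is the embedding of the current token. Under that assumption, the chain rule gives, for every token \(w\) in the vocabulary:

\[\frac{\partial \mathcal{L}(S)}{\partial o_i[w]} = \sum_{j} \frac{\partial \mathcal{L}(S)}{\partial e_{t_i}[j]} \, \frac{\partial e_{t_i}[j]}{\partial o_i[w]} = \sum_{j} \frac{\partial \mathcal{L}(S)}{\partial e_{t_i}[j]} \, E_{w}[j] = E_w \cdot \nabla_{e_{t_i}} \mathcal{L}(S)\]

In other words, each coordinate of the one-hot gradient is the dot product between one token’s embedding and the gradient in the embedding space. The most negative coordinates point to the tokens that, to first order, would reduce the loss the most.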

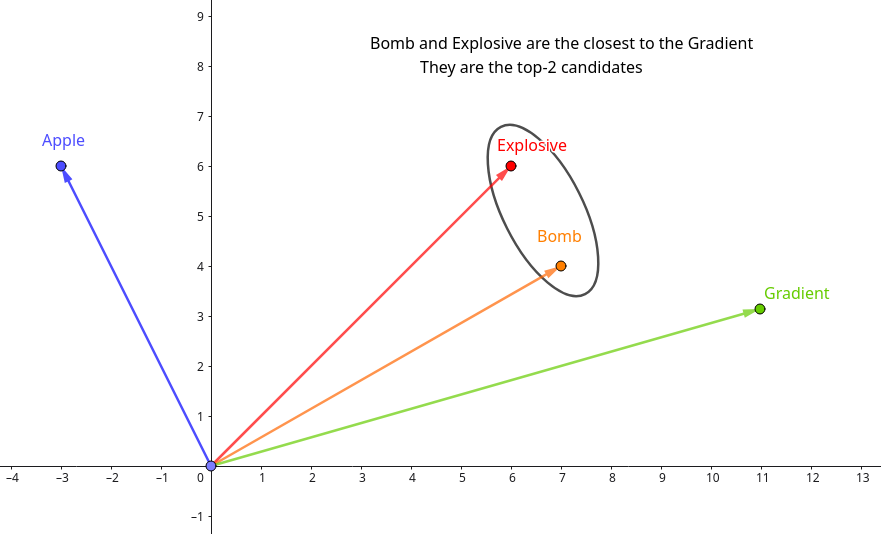

Specifically, for each position \(i\) in the suffix \(S\), we compute \(g_i = \nabla_{e_{t_i}} \mathcal{L}(S)\), where \(e_{t_i}\) is the embedding of the current token at position \(i\). Given the gradient \(g_i\), instead of taking a step along it, we look for the \(k\) tokens whose embedding vectors are best aligned (via dot product) with the descent direction \(-g_i\):

\[\mathcal{V}_{cand} = \text{top-k}_{w \in \mathcal{V}} \left( \underbrace{ E_{w} }_{ \substack{\text{Embedding vector} \\ \text{of the candidate } w} } \cdot \underbrace{ \left( -\nabla_{e_{t_i}} \mathcal{L}(S) \right) }_{ \substack{\text{Optimal direction}} } \right)\]

This gives us the \(k\) token swaps at position \(i\) of the suffix that, to first order, are expected to decrease \(\mathcal{L}(S)\) the most. Regarding the previous example we could have:

Figure 2: Simplified visualization, the gradient is not a word so we look for the closest vectors, to keep the “direction” of the gradient.

The gradient in the embedding space is not a word of our vocabulary (apple, explosive and bomb), so we compute the top-2 vectors in this space that are best aligned with the negative gradient (we choose top-2 to get the expected behavior here, but \(k\) is a hyperparameter that needs to be tuned). In our context, this means that swapping in the words “explosive” and “bomb” should lower the loss more than “apple”, which makes sense: we are aiming for content more harmful than an apple.
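The candidate selection at a single position can be sketched as follows. The embeddings and the gradient are made-up 2-D values, and `top_k_candidates` is a hypothetical helper name. In a real attack the gradient would come from backpropagation through the LLM.

```python
# Toy 2-D embeddings (illustrative values, not taken from a real model).
EMBEDDINGS = {
    "bomb": [0.9, 0.8],
    "explosive": [0.8, 0.9],
    "apple": [-0.7, 0.2],
}

def top_k_candidates(grad, k):
    """Rank every token w by E_w . (-grad) and return the k best."""
    neg_grad = [-g for g in grad]

    def score(word):
        return sum(e * d for e, d in zip(EMBEDDINGS[word], neg_grad))

    return sorted(EMBEDDINGS, key=score, reverse=True)[:k]

# Hypothetical gradient of the loss w.r.t. the current suffix embedding.
grad = [-1.0, -0.5]

candidates = top_k_candidates(grad, k=2)
```

With these values the scores are 1.3 for “bomb”, 1.25 for “explosive” and -0.6 for “apple”, so the two retained candidates are “bomb” and “explosive”.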

We take “explosive” and “bomb”, compute the actual loss for each candidate, and keep the best one as our new suffix for the next step (this is an iterative process).
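Putting the pieces together, the iterative process can be sketched on a toy problem. This is not an attack on a real LLM: the loss below is a stand-in (squared distance between each suffix embedding and a hypothetical per-position goal vector in `GOALS`) chosen because its gradient can be written by hand. Also, this sketch tries every top-k swap at every position, whereas the paper by Zou et al. evaluates a randomly sampled batch of candidates. All names (`loss`, `grad_at`, `top_k`, `gcg_step`) are illustrative.

```python
# Toy vocabulary with 2-D embeddings (illustrative values only).
EMBEDDINGS = {
    "apple": [-0.7, 0.2],
    "bomb": [0.9, 0.8],
    "explosive": [0.8, 0.9],
    "!": [0.1, -0.6],
}
# Stand-in for the LLM: the loss is low when each suffix embedding is close
# to a per-position goal. A real attack would use -log P(target | prompt + S).
GOALS = [[0.9, 0.8], [0.8, 0.9]]

def loss(suffix):
    return sum((e - g) ** 2
               for tok, goal in zip(suffix, GOALS)
               for e, g in zip(EMBEDDINGS[tok], goal))

def grad_at(suffix, i):
    """Analytic gradient of the toy loss w.r.t. the embedding at position i."""
    return [2 * (e - g) for e, g in zip(EMBEDDINGS[suffix[i]], GOALS[i])]

def top_k(grad, k):
    """Tokens whose embedding best aligns with the negative gradient."""
    def score(word):
        return -sum(e * g for e, g in zip(EMBEDDINGS[word], grad))
    return sorted(EMBEDDINGS, key=score, reverse=True)[:k]

def gcg_step(suffix, k=2):
    """Try the top-k swaps at every position; keep the single best one."""
    best, best_loss = suffix, loss(suffix)
    for i in range(len(suffix)):
        for cand in top_k(grad_at(suffix, i), k):
            trial = suffix[:i] + [cand] + suffix[i + 1:]
            trial_loss = loss(trial)
            if trial_loss < best_loss:  # only accept real improvements
                best, best_loss = trial, trial_loss
    return best

suffix = ["!", "!"]
history = [loss(suffix)]
for _ in range(5):
    suffix = gcg_step(suffix)
    history.append(loss(suffix))
```

On this toy problem the suffix goes from `["!", "!"]` to `["bomb", "explosive"]` in two steps and the loss never increases. It also shows why candidates are re-evaluated: at the first position the gradient score ranks “explosive” above “bomb”, yet the exact loss is lower with “bomb”. The gradient only proposes swaps, and the true loss decides.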

Seems too technical for you? In the next article of this series, we will see how simple it is to craft adversarial examples against LLMs using the BrokenHill framework.

Are you vulnerable to these attacks?

When you deploy on-premises or on-device without dedicated protections, the weights of your AI models are exposed, which opens the door to these attacks. These attacks are highly scalable: because the same model is often deployed across multiple environments, a single adversarial suffix can be sufficient to compromise every instance.

The AI protection of Skyld helps you to protect the parameters of an AI model (weights) against extraction before and during the model execution, using mathematical transformations to keep the model weights confidential.